I had the opportunity to present at HIMSS 2026 on something that keeps me up at night—how healthcare organizations can actually deploy AI in ways that work. Not the hype, not the theory, but the real infrastructure and governance you need to make this stuff operational.

Here’s my take on what matters.

The Need for Trusted AI

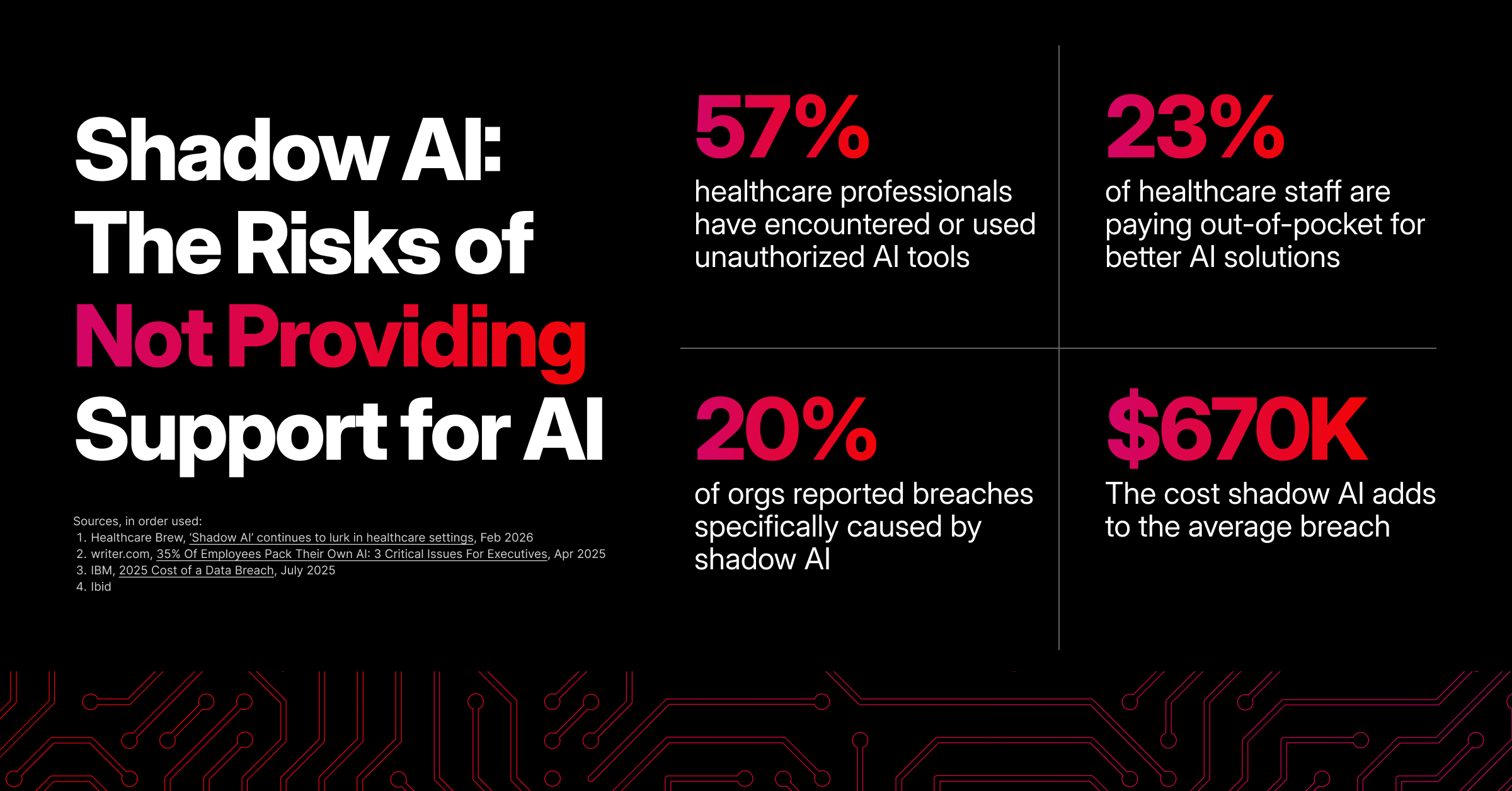

Healthcare has an AI problem, but not the one most people think. The problem isn’t whether AI can help—it obviously can. Better diagnostics, operational efficiency, clinical decision support. That’s the easy part.

The hard part? Most organizations are trying to bolt AI onto infrastructure that wasn’t built for it, with governance frameworks that don’t exist or don’t scale. And in healthcare, you can’t afford to get this wrong. We’re not talking about optimizing ad clicks here—we’re talking about patient lives and data that absolutely must stay secure. We’re talking about AI you can trust.

Seven Practical Attributes for Trusted AI

Here’s a straightforward view of what “trusted AI” means in practice, and how to get there.

- Accurate - Models must be validated against the populations you serve and monitored continuously. Accuracy isn’t a one-time benchmark; it’s an ongoing operational metric.

- Private - Patient data used for training or inference must remain protected throughout the lifecycle. That means secure ingestion, tokenization/anonymization where appropriate, and strict controls on access.

- Governed + Secure - Policies, role-based controls, and secure infrastructure are non-negotiable. Governance needs to cover model development, deployment, and change control.

- Auditable - Every model decision, deployment, and data access must be traceable. Audit trails aren’t optional; they’re the record that lets you explain and defend decisions.

- Flexible - Healthcare workflows vary. Your infrastructure and models must support hybrid and multi-cloud deployments, edge processing for latency-sensitive use cases, and integration with existing EHRs and clinical systems.

- Measurable - Define KPIs up front: clinical impact, error rates, false positives/negatives, latency, and utilization. Instrument everything so you can measure ROI and clinical safety.

- Resilient - Design for failure: redundancy, disaster recovery, and model rollback procedures. AI that stops working or drifts silently is worse than no AI at all.
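
To make "Private" and "Auditable" a little more concrete, here is a minimal Python sketch, not a production design. It assumes a keyed hash (HMAC-SHA256) is an acceptable way to tokenize patient identifiers in your environment, and it chains audit records by hash so that later edits become detectable. The function names, the event fields, and the model version string are all hypothetical, chosen only for illustration.

```python
import hashlib
import hmac
import json


def pseudonymize(patient_id: str, secret_key: bytes) -> str:
    """Replace a patient identifier with a keyed hash (HMAC-SHA256)."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def append_audit_record(log: list, event: dict) -> dict:
    """Append an event whose hash also covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else "0" * 64
    payload = json.dumps(event, sort_keys=True)
    record_hash = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
    record = {"event": event, "prev_hash": prev_hash, "hash": record_hash}
    log.append(record)
    return record


def verify_audit_log(log: list) -> bool:
    """Recompute every hash in order; any edited or reordered record fails."""
    prev_hash = "0" * 64
    for record in log:
        payload = json.dumps(record["event"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        if record["prev_hash"] != prev_hash or record["hash"] != expected:
            return False
        prev_hash = record["hash"]
    return True


if __name__ == "__main__":
    # Hypothetical key and identifiers; a real key belongs in a secrets manager.
    key = b"demo-key-not-for-production"
    audit_log = []
    append_audit_record(audit_log, {
        "action": "inference",
        "model_version": "example-model-1.0.0",  # hypothetical version label
        "patient_token": pseudonymize("MRN-0001", key),
        "user_role": "clinician",
    })
    print(verify_audit_log(audit_log))
```

The point of the chain is that a record cannot be quietly altered after the fact without breaking verification. Whether a keyed hash satisfies your de-identification requirements is a question for your compliance and legal teams, not something this sketch settles.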

How to Operationalize These Attributes

Some tips for taking action:

- Start with use cases that align with real organizational priorities and can show measurable value. Skip the AI-for-AI’s-sake projects.

- Build cross-functional teams. AI governance is not just a tech problem—you need clinical, IT, security, legal, and compliance all at the table.

- Put model validation and monitoring pipelines in place before deployment.

- Deploy secure, end-to-end data pipelines and strict access controls. Assume data will be used across multiple phases and protect it accordingly.

- Invest in training so your staff understands what AI can and can’t do. Unrealistic expectations are just as harmful as ignoring the technology entirely.

- Use a hybrid approach to infrastructure so you can keep sensitive workloads on-prem or in a controlled environment while leveraging cloud elasticity where appropriate.

- Pilot incrementally with clear success criteria. Measure, iterate, then scale. Build rollback and incident playbooks into every deployment. Rushing this is how you end up with expensive failures.
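
As a rough illustration of "clear success criteria" and a rollback trigger, here is a small Python sketch. Everything in it is an assumption made for the example: the `SuccessCriteria` fields, the threshold values in the usage note, and the rule that a false negative rate above twice the agreed limit triggers rollback. Your clinical and compliance teams would set the real numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Limits agreed before the pilot starts (illustrative fields only)."""
    max_false_negative_rate: float
    max_false_positive_rate: float
    max_p95_latency_ms: float


def error_rates(tp: int, fp: int, tn: int, fn: int) -> tuple:
    """Return (false negative rate, false positive rate) from confusion counts."""
    fnr = fn / (fn + tp) if (fn + tp) else 0.0
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    return fnr, fpr


def pilot_decision(criteria: SuccessCriteria, tp: int, fp: int, tn: int, fn: int,
                   p95_latency_ms: float, rollback_factor: float = 2.0) -> str:
    """Return 'scale', 'iterate', or 'rollback' for one measurement window."""
    fnr, fpr = error_rates(tp, fp, tn, fn)
    # Assumed safety rule: missed cases far above the limit mean roll back now.
    if fnr > criteria.max_false_negative_rate * rollback_factor:
        return "rollback"
    meets_all = (
        fnr <= criteria.max_false_negative_rate
        and fpr <= criteria.max_false_positive_rate
        and p95_latency_ms <= criteria.max_p95_latency_ms
    )
    return "scale" if meets_all else "iterate"


if __name__ == "__main__":
    # Hypothetical thresholds and counts for a single pilot window.
    criteria = SuccessCriteria(0.10, 0.20, 500.0)
    print(pilot_decision(criteria, tp=90, fp=10, tn=90, fn=10, p95_latency_ms=300.0))
```

Writing the criteria down as data before the pilot begins is the design choice that matters here. It keeps the "scale or roll back" call from being renegotiated after the results come in.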

Infrastructure: Get This Right First

You can’t deploy AI successfully on inadequate infrastructure. If the foundation isn’t there, you’re setting yourself up for failure.

What does “right” look like?

- Compute resources that can actually handle AI workloads without breaking everything else

- Secure data pipelines from end to end

- Hybrid/multi-cloud strategies that give you flexibility without sacrificing control

- Edge capabilities for use cases that need real-time processing

And here’s the key: it has to scale. Whatever you build today needs to handle the AI capabilities coming in six months, a year, two years. This space is moving fast.

Here’s What It Comes Down To

AI in healthcare has real potential. But potential doesn’t mean much if you can’t operationalize it in ways that maintain patient trust and provider confidence.

This isn’t about having the fanciest algorithms or the most powerful computing. It’s about deploying AI responsibly in an environment where the stakes are higher than in almost any other industry.

Healthcare organizations already hold themselves to higher standards than almost any other industry because of their focus on patient safety, data security, and regulatory compliance. You don’t need someone telling you what bar to meet. You need infrastructure and governance frameworks that actually let you meet the bar you’ve already set.

That’s what we do at Expedient. We’ve built our infrastructure specifically for organizations that can’t afford to compromise on security or compliance.

The organizations that will succeed are the ones building strong foundations—robust infrastructure combined with governance frameworks that actually work. The ones that rush forward without these elements are setting themselves up for security incidents, compliance problems, and erosion of the trust that healthcare depends on.

To set your organization up to succeed, sign up for a free, risk-free three-month trial of AI CTRL.